eLife’s recent move to publish every manuscript it sends for peer review as a “reviewed preprint” has sparked renewed discussion on transparency and trust in scientific publishing. In this article, Amrapali Datta discusses how open peer review challenges the traditional reliance on Impact Factor metrics and argues for a culture of accountability, dialogue, and equitable access in science.

“Unthinking respect for authority is the greatest enemy of truth”

— Albert Einstein

In late 2022, when eLife announced it would publish every manuscript passing editorial checks as a “reviewed preprint”, it rattled the academic world. A major biomedical journal had decided to stop issuing accept/reject verdicts and to make the hidden back-and-forth of peer review visible to everyone. Reviews, author responses, and editorial notes would now be accessible to the entire community.

The move unsettled many. Could careers survive without an accept/reject stamp of approval? Clarivate later stripped eLife of its Impact Factor (IF), arguing that the new model no longer met the criteria for the Science Citation Index Expanded (SCIE). Yet the journal stood firm, insisting that trust in science depends on openness, not secrecy, and challenging the value of a metric that has long dominated how research is judged.

This clash highlights a deeper question: how should we evaluate scientific work, and how can researchers earn the trust of the societies they serve?

The black box of peer review

At the heart of scientific publishing lies peer review. Experts, mostly anonymous, assess a manuscript’s merit and fit and advise editors on whether it deserves to be published. Their verdict can shape careers, influence funding, and determine which problems attract attention. Yet this crucial process is largely hidden from view.

While anonymity shields reviewers from backlash, it can also conceal bias and systemic problems. Studies have documented disadvantages for women, younger researchers, and those outside elite institutions. Richard Smith, former editor of the British Medical Journal, warned that peer review is “biased against the provincial and those from low and middle-income countries”. For many, rejection feels less like evaluation and more like exclusion.

At its best, peer review sharpens ideas. At its worst, it reinforces hierarchies and leaves those outside the system wondering whether the game is rigged.

The weight of IF

If peer review is the black box, the journal Impact Factor has become the shortcut. Created in the 1970s to help librarians choose subscriptions, it measures the average number of citations received in a given year by articles a journal published in the previous two years. Originally a practical tool, it has since ballooned into an all-purpose proxy for scientific quality. A high IF often counts for more than the science itself. Universities use it to evaluate job applicants; funders use it to gauge quality. This has distorted incentives. Researchers chase “high-impact” journals, often sidelining replication studies, negative results, and research on urgent local challenges.
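
For readers unfamiliar with the metric, the standard two-year Impact Factor for a year $Y$ can be sketched as the ratio below. The notation is introduced here only for illustration: $C_Y(y)$ is the number of citations received in year $Y$ by items the journal published in year $y$, and $N_y$ is the number of citable items (mainly research articles and reviews) it published in year $y$.

$$
\mathrm{IF}_Y = \frac{C_Y(Y-1) + C_Y(Y-2)}{N_{Y-1} + N_{Y-2}}
$$

As a hypothetical illustration, a journal that published 120 citable items in 2022 and 80 in 2023, and whose items from those two years were cited 1,000 times during 2024, would have a 2024 Impact Factor of 1,000 ÷ 200 = 5.0. Because this is an average over the whole journal, a few highly cited papers can raise it substantially, and it says nothing about how often any individual article in that journal is cited.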

As critics note in the Leiden Manifesto, journal-level metrics are a poor substitute for evaluating individual contributions. Yet despite repeated calls for change, the Impact Factor’s grip remains strong.

While the Impact Factor dominates how research is evaluated, it reveals little about the quality or fairness of the review process itself.

Opening the doors

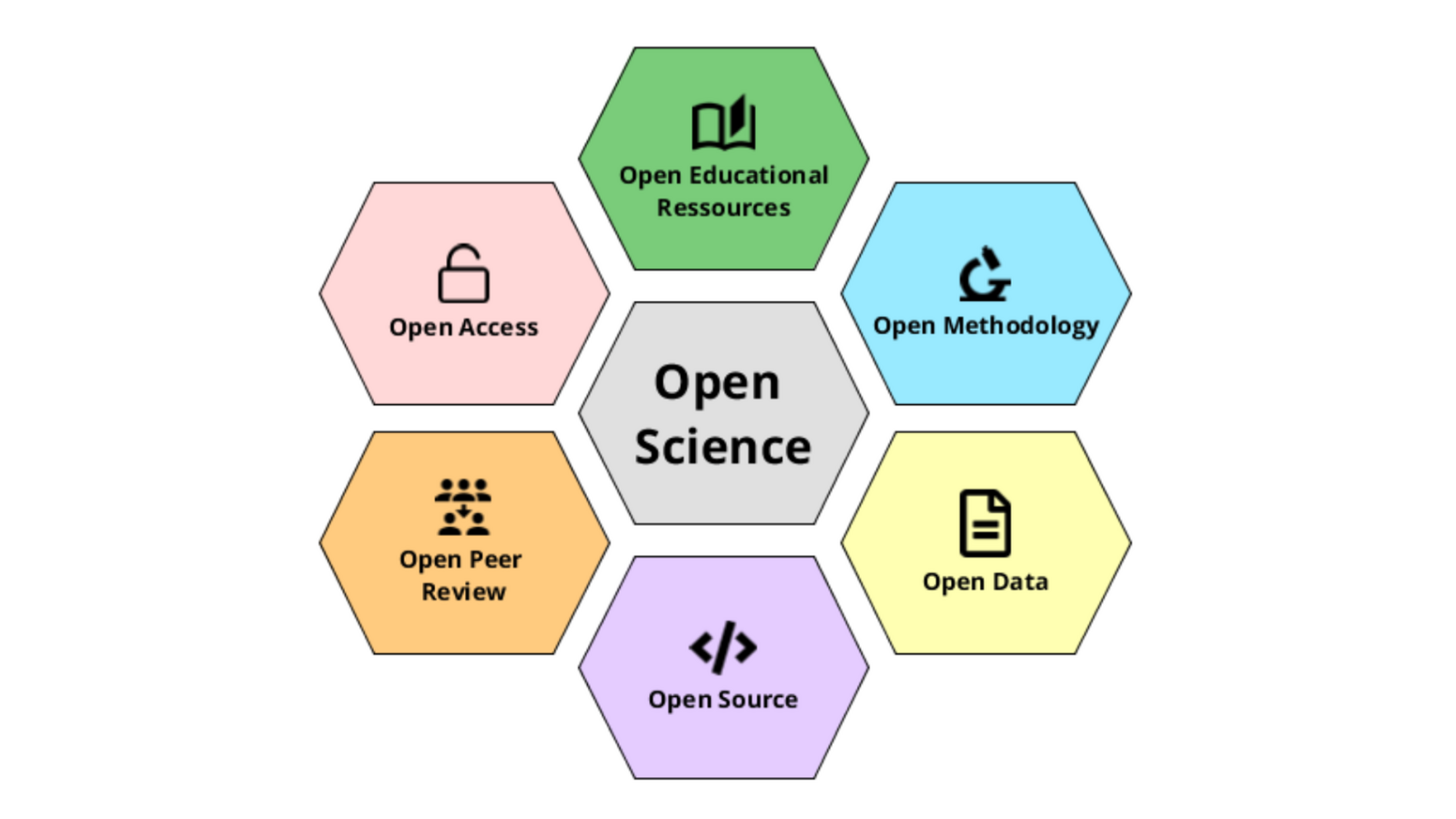

Open peer review emerged as an attempt to fix these flaws. In this model, reviews, author responses, and editorial decisions are public. Readers see not only the polished paper but the debate that shaped it. By making the evaluation transparent, this model allows readers and committees to assess the research on its merits rather than relying solely on the journal’s prestige. Transparency in peer review thus complements broader efforts to move beyond metrics and rebuild trust in science.

Advocates argue this improves accountability, documents the evolution of ideas, and teaches young scientists how critique strengthens research. Organisations like ASAPbio are pushing this further by encouraging review of preprints, even before journals are involved.

Yet adoption has been limited. Many reviewers fear backlash, strained professional relationships, or a lack of protection if their names are attached to strong critiques. A flexible approach may offer a middle ground. At eLife, for example, the reviews are made public, but each reviewer decides whether to sign them. This balance allows openness without forcing exposure.

The first time I read an open exchange between authors and reviewers, I was struck by how much it revealed: the probing questions, the pushback, and the way a paper evolved through dialogue.

Making this process visible does not solve every flaw, but it reflects science as it truly unfolds: through debate, revision, and disagreement.

India’s bottleneck

In India, the weight of the Impact Factor is especially heavy. Hiring committees often begin by scanning CVs for “high-impact” publications. Subhash Chandra Lakhotia, a senior zoologist, has put it bluntly: “Impact factor can never be an effective tool in distinguishing good research from bad”.

When I considered sending my first PhD paper to an open-review journal, peers warned me against it. Their concern was not about the model itself but about how committees would interpret my CV.

A strong paper in a journal without an IF, they argued, might count for little. This hesitation is widespread, and it explains why experiments like eLife’s polarise opinion. Openness attracts support, but existing incentives keep many from embracing it.

Beyond numbers

Numbers are easy to count, but they cannot capture the value of research. The dominance of the IF has narrowed definitions of quality and skewed incentives. Trust in science will not be rebuilt by clinging to metrics; it will come from shifting attention back to substance: the reasoning, critique, and debate that shape science.

Open peer review cannot solve every problem, but it represents a step in that direction. What matters is not whether reviewers reveal their names, but that their arguments are visible. Debate must be public; identity can remain optional.

From journals to society

While this may appear to be an insider squabble within academia, it shapes the science society gets. Public trust in science has never been more contested. From COVID-19 vaccines, where people questioned the speed of clinical trials, to climate change, where scientific consensus collides with politicised denial, public debates hinge on whether the scientific process is fair. The controversies extend to GM crops and drug safety as well. Each dispute reflects anxieties about rigour and transparency.

The link between academic evaluation and public benefit is direct. When careers are tied to the IF, researchers tend to chase “hot” topics over urgent local ones. A young scientist in India might avoid studying soil fertility or air pollution, even though these problems affect millions. A dengue vaccine study may lose out to CRISPR research, even if the former would save more lives locally. Such vital studies rarely find a home in “high-impact” journals, which favour globally fashionable topics. If open peer review gains acceptance, committees could look beyond journal labels and judge the importance and merit of the science itself, but only if they are willing to invest the time.

What needs to change

For openness to make a difference, institutions and researchers must act:

Hiring committees should move beyond IF-based filters and engage directly with the science.

Funders can create incentives by recognising preprints and transparent reviews.

Universities could train PhD students in both giving and receiving peer review, helping them see critique as part of learning rather than gatekeeping.

Researchers must also take the risk of publishing in open-review platforms, signalling that they value transparency over branding.

However, one critical challenge shadows this transition: the cost of openness. Many open-access or open-review journals require authors to pay Article Processing Charges (APCs), which can be prohibitively expensive, especially for researchers in low- and middle-income countries. While these fees sustain open publishing infrastructures, they risk reinforcing existing inequities: those who can afford to publish are heard, while others remain excluded. For openness to truly democratise science, equitable funding models or institutional support for APCs are essential.

The transition will not be quick. For young researchers like me, choosing openness can feel like a gamble: career safety versus a belief in transparency. But the risk is worth it. If science is to serve society, then society deserves to see not just the answers but the debates that built them.